Claude Code Autonomy Grows as Users Gain Experience, Study Shows


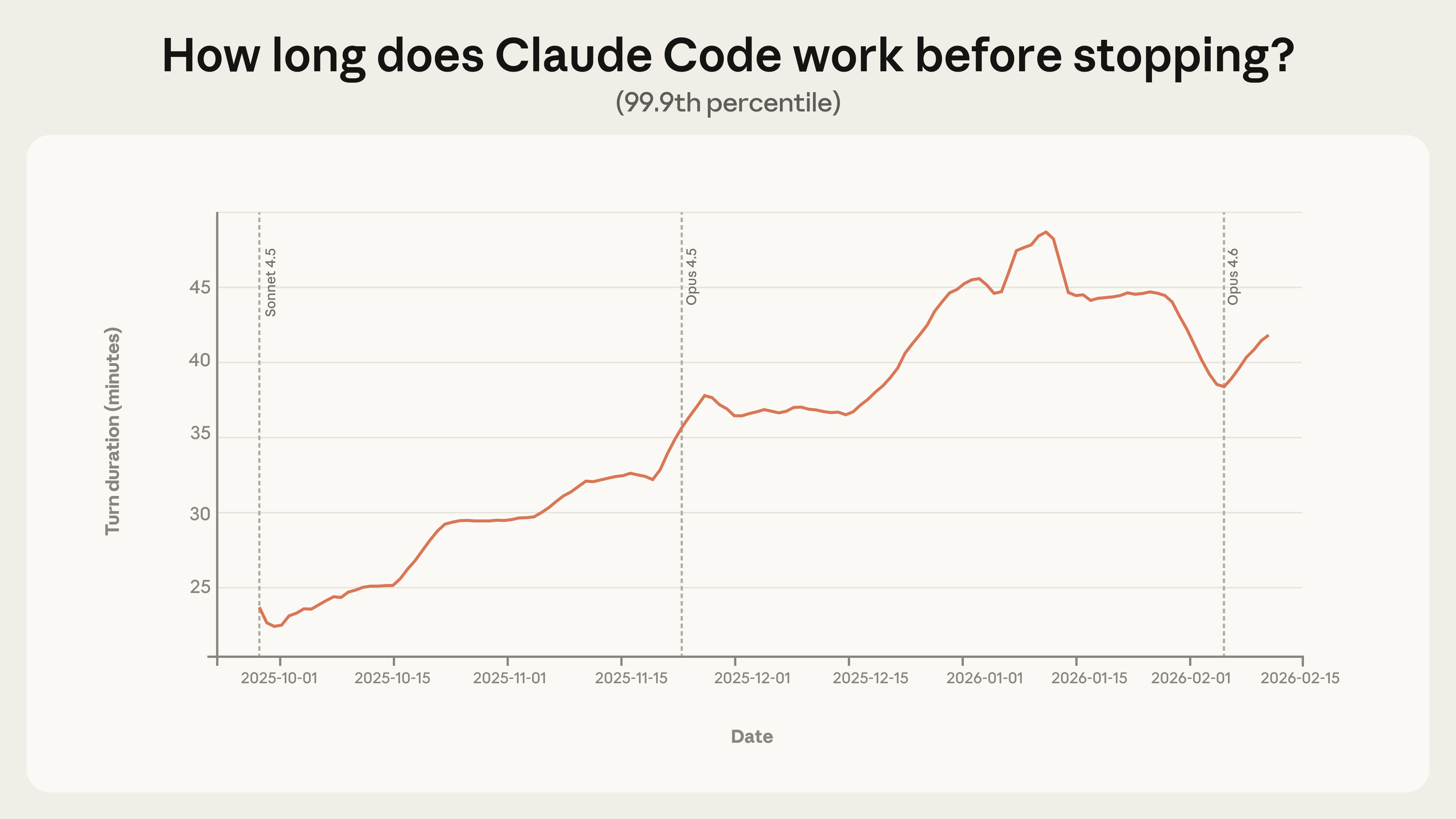

Long‑Running Sessions Nearly Double in Length: The 99.9th‑percentile turn duration rose from under 25 minutes in late September 2025 to over 45 minutes by early January 2026, a smooth upward trend across model releases that suggests influences beyond raw capability [1].

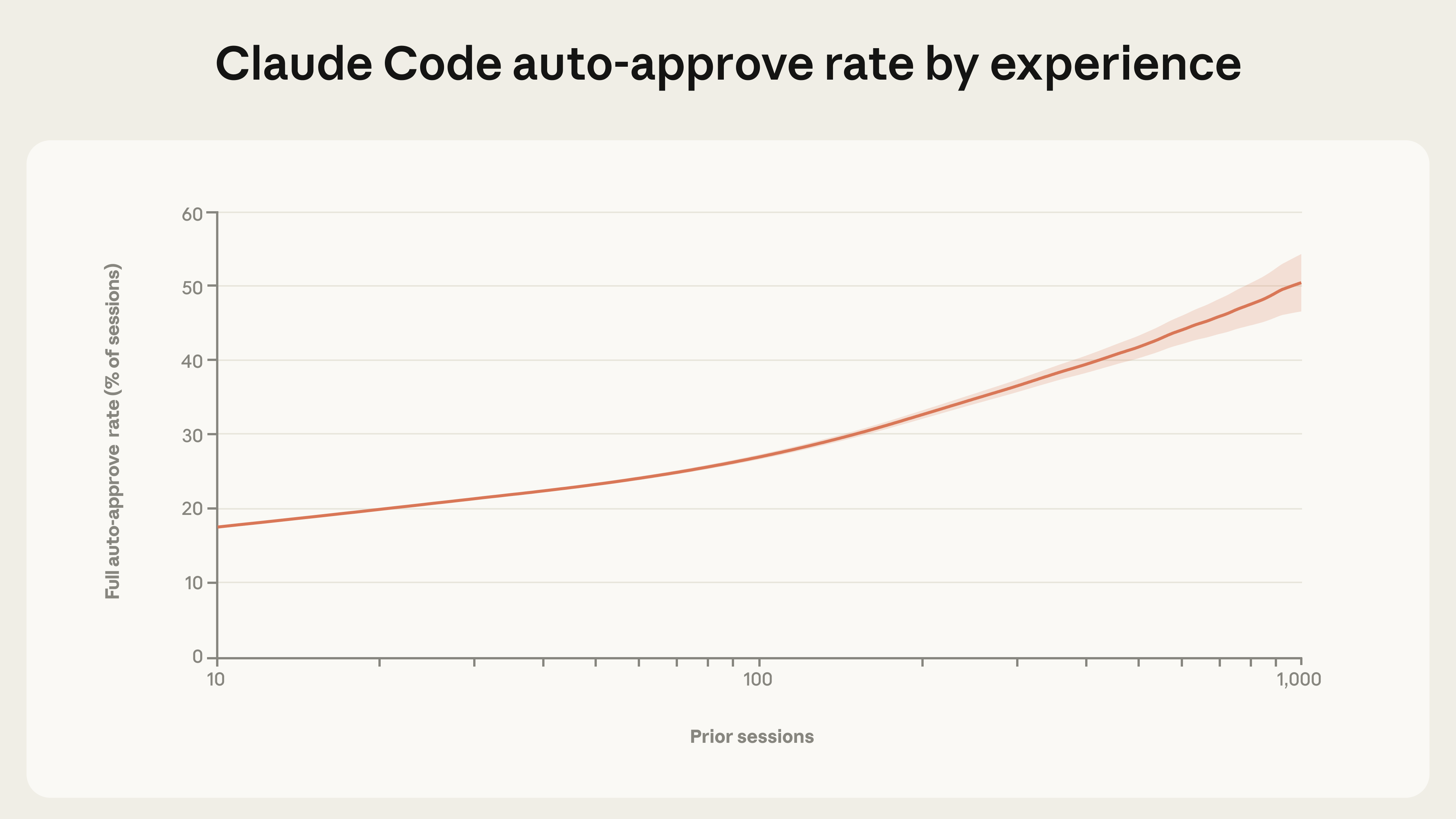

User Experience Drives Higher Autonomy and More Interrupts: Auto‑approve usage climbs from roughly 20% for newcomers to over 40% after 750 sessions, while per‑turn interrupt rates increase from about 5% to 9% as users become seasoned [1].

Claude Code Requests Clarification More Than Humans Interrupt: On the most demanding goals, the model’s self‑initiated pauses exceed human‑initiated interruptions by a factor of two, indicating built‑in uncertainty handling [1].

Agents Predominantly Operate in Low‑Risk Software Engineering: Nearly 50% of public‑API tool calls involve software engineering; 80% include safeguards, 73% retain a human in the loop, and only 0.8% are irreversible, with emerging activity in healthcare, finance, and cybersecurity. Internal Claude Code usage shows success on the hardest tasks doubled and average human interventions fell from 5.4 to 3.3 per session, reflecting growing trust and efficiency [1].


Timeline

Apr 28, 2025 – Claude Code shows far higher automation rates than Claude.ai (79% vs 49% of conversations), with distinct sub‑type patterns such as 35.8% feedback‑loop and 43.8% directive interactions, indicating a shift toward more autonomous coding tasks. Developers mainly use it for front‑end web work (31% JavaScript/TypeScript, 28% HTML/CSS, 20% Python/SQL). Startups account for roughly one‑third of interactions versus only 13% from enterprises, and individual users (students, academics, hobbyists) make up about half of all usage[4].

Aug – Dec 2025 – Internal Claude Code logs record that success on the hardest tasks doubles and average human interventions fall from 5.4 to 3.3 per session, reflecting growing trust and efficiency as agents handle more complex problems while retaining safeguards[1].

Late Sep 2025 – The 99.9th‑percentile turn duration in long‑running Claude sessions grows from under 25 minutes to over 45 minutes by early Jan 2026, a smooth trend across model releases that suggests factors beyond raw capability drive longer autonomous interactions[1].

Nov 13‑20, 2025 – Anthropic gathers one million Claude.ai conversations and one million first‑party API transcripts to create five new “economic primitives” (task complexity, skill levels, use case, AI autonomy, task success) that will quantify AI usage for its Economic Index[2].

Dec 2, 2025 – A survey of 132 engineers reports Claude now handles 60% of daily work and “boosts self‑reported productivity by 50%,” while staff feel they have become “full‑stack,” tackling front‑end, database, and API tasks they previously avoided, though some fear skill erosion and note that 27% of AI‑assisted work would not have been done otherwise[3].

Dec 2, 2025 – Internal usage logs reveal average task complexity climbs from 3.2 to 3.8, consecutive tool calls per transcript increase 116% (9.8 → 21.2), and human turns fall 33% (6.2 → 4.1), indicating Claude Code manages more autonomous, complex workflows with reduced oversight[3].

Dec 2, 2025 – Engineers shift collaboration patterns as “routine questions now go to Claude 80‑90% of the time,” reducing mentor interactions for junior staff and sparking concerns about mentorship loss and future career pathways[3].

Early Jan 2026 – Auto‑approve usage climbs from roughly 20% for newcomers to over 40% after 750 sessions, while per‑turn interrupt rates rise from about 5% to 9% as users become seasoned, showing experienced users grant more autonomy yet intervene more frequently[1].

Early Jan 2026 – Claude Code asks for clarification twice as often as humans interrupt on complex tasks, indicating built‑in uncertainty handling that prioritizes safety in high‑complexity goals[1].

Jan 15, 2026 – Anthropic’s Economic Index shows augmented interaction now exceeds automation on Claude.ai (52% vs 45%) after product updates such as file creation, persistent memory, and workflow customization. Usage remains heavily coding‑centric: the top ten tasks account for 24% of chats and 32% of API traffic, and “modifying software to correct errors” alone makes up 6% of chats and 10% of API calls[2].

Jan 15, 2026 – The U.S. AI‑usage Gini coefficient falls from 0.37 to 0.32, and regression models project per‑capita Claude usage could equalize nationwide within 2‑5 years—roughly ten times faster than the diffusion of past economically consequential technologies—highlighting rapid, cross‑state adoption[2].

Jan 15, 2026 – Productivity calculations adjust from an optimistic 1.8 percentage‑point annual boost to about 1.0 percentage‑point after incorporating the task‑success primitive, revealing a net deskilling effect as AI handles the most educated components of many occupations[2].

Feb 18, 2026 – Anthropic reports that agents operate mainly in low‑risk software engineering, with 50% of public‑API tool calls involving coding, 80% including safeguards, 73% retaining a human in the loop, and only 0.8% being irreversible, while emerging activity appears in healthcare, finance, and cybersecurity[1].

All related articles (4 articles)

- Anthropic: Claude Code autonomy rises as users gain experience, study finds

- Anthropic: Anthropic’s January 2026 Economic Index: New AI Usage Metrics and Their Economic Implications

- Anthropic: AI‑driven productivity surge and growing pains at Anthropic

- Anthropic: Anthropic Finds AI Coding Agent Drives Automation, Startup Adoption
